AutoGen (Microsoft) Review 2026

Microsoft's open-source framework for building conversational multi-agent systems with human feedback.

Best for: Research teams and enterprises building conversational AI systems

Key Takeaways

- AutoGen pioneered conversational multi-agent AI, but is now in maintenance mode — new features go to Microsoft Agent Framework

- The AssistantAgent + UserProxyAgent pairing is one of the most flexible human-in-the-loop patterns in any agent framework

- AutoGen Studio provides a visual no-code interface for building and testing agent workflows without writing Python

- Built-in Docker sandboxing for code execution makes AutoGen a comparatively safe choice for agentic code generation scenarios

- Migration to Microsoft Agent Framework is required for access to new capabilities — plan your path now

What Is AutoGen?

AutoGen is Microsoft Research's open-source framework for building multi-agent AI applications using a conversational paradigm. Unlike frameworks where agents are orchestrated by a central planner or pass structured task objects between each other, AutoGen agents communicate via natural language — they literally talk to each other, debate approaches, request clarification, and produce results through dialogue. This conversational model, unusual at the time of AutoGen's release in 2023, has proven remarkably expressive for complex problem-solving scenarios.

The framework is now in a significant transition. AutoGen v0.4 was previewed in late 2024 and released as stable in January 2025. It rebuilt the core architecture around an async, event-driven model, a meaningful improvement for production reliability. Microsoft has since announced that AutoGen and Semantic Kernel are converging into the Microsoft Agent Framework, and that new features and capabilities will flow to that unified platform rather than to AutoGen. AutoGen itself is in maintenance mode: security fixes and critical bugs will be addressed, but the development frontier has moved on.

This creates a genuine dilemma for new adopters. AutoGen remains a powerful, battle-tested framework with a large community and deep ecosystem. But the honest recommendation for anyone starting a new project today is to evaluate whether to build on AutoGen knowing you'll likely need to migrate to Microsoft Agent Framework for new capabilities, or to start on a framework without this transition overhead. For context on the broader agent landscape, see our guide on how AI agent frameworks work.

Getting Started

AutoGen installs via pip. Use pip install autogen-agentchat for the high-level AgentChat API, and add pip install "autogen-ext[openai,docker]" for the OpenAI model client and the Docker code executor. The quotes keep shells such as zsh from interpreting the brackets. The v0.4 release splits components into focused packages: autogen-core, autogen-agentchat, autogen-ext, and autogenstudio for Studio. It's a clean architecture, though it can confuse users following older v0.2 tutorials.

The minimal example — an AssistantAgent and a UserProxyAgent collaborating on a task — is about 15 lines of Python. AutoGen Studio, the visual companion app, can be launched with autogenstudio ui --port 8080 and provides a drag-and-drop interface for building and testing workflows without any code. Both paths are genuinely accessible.

Key Features in Depth

AssistantAgent and UserProxyAgent

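AutoGen's core abstraction is elegantly simple. The AssistantAgent is an LLM-backed agent that reasons, generates code, and produces responses. In the classic v0.2 API, the UserProxyAgent acts on behalf of a human. It can execute code, provide human feedback when configured to do so, or reply automatically based on predefined patterns. In v0.4's AgentChat API, code execution moves to a separate CodeExecutorAgent, and the UserProxyAgent focuses on relaying human input.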

This two-agent model is deceptively powerful. Because the UserProxyAgent can be configured to automatically execute generated code in a sandbox and return results, a single AssistantAgent + UserProxyAgent pair can autonomously write code, run it, observe the output, debug failures, and iterate — all without human intervention. This is the AutoGen workflow that made the framework famous in late 2023, and it remains one of the most capable patterns in the agent ecosystem.
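The write, run, observe, fix loop is easier to see without any framework in the way. The sketch below is plain Python, not AutoGen's API. fake_assistant is a hypothetical stand-in for an LLM-backed AssistantAgent. execute_python plays the executor role by running code in a subprocess on the host, with no sandbox at all.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, List, Tuple


def execute_python(code: str, timeout: float = 10.0) -> Tuple[int, str]:
    """Run a snippet in a fresh Python process; return (exit code, combined output).

    WARNING: no sandbox. This runs on the host and only stands in for an executor.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "snippet.py"
        script.write_text(code)
        proc = subprocess.run(
            [sys.executable, str(script)],
            capture_output=True,
            text=True,
            timeout=timeout,
            cwd=workdir,
        )
    return proc.returncode, proc.stdout + proc.stderr


def solve(generate: Callable[[List[str]], str], max_turns: int = 3) -> List[str]:
    """Write -> run -> observe loop: each execution result is fed back to the generator."""
    transcript: List[str] = []
    for _ in range(max_turns):
        code = generate(transcript)               # "assistant" proposes code
        exit_code, output = execute_python(code)  # "user proxy" runs it
        transcript.append(f"exitcode: {exit_code}\n{output}")
        if exit_code == 0:                        # success: stop iterating
            break
    return transcript


def fake_assistant(transcript: List[str]) -> str:
    """Hypothetical stand-in for an LLM: buggy first draft, fixed after seeing the error."""
    if not transcript:
        return "print(totl)"  # typo -> NameError on the first run
    return "total = sum(range(10))\nprint(total)"


log = solve(fake_assistant)
for entry in log:
    print(entry)
```

The first draft fails with a NameError, and the error text goes back into the transcript. The second draft then succeeds. In AutoGen the feedback would travel as a chat message, and the executor would ideally be a Docker container, but the control flow is the same.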

Human-in-the-loop is first-class. In the v0.2 API, the UserProxyAgent's human_input_mode setting decides how often it pauses for a person. ALWAYS asks at every turn, TERMINATE asks only when the conversation reaches a termination point, and NEVER runs without asking. Many frameworks treat human-in-the-loop as an afterthought; AutoGen treats it as a core design parameter.
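In the v0.2 API these choices correspond to the human_input_mode values ALWAYS, TERMINATE, and NEVER. The function below is a simplified model of that decision, written for illustration only. The names HumanInputMode and should_ask_human are hypothetical, and AutoGen's own implementation has more moving parts.

```python
from enum import Enum


class HumanInputMode(Enum):
    ALWAYS = "ALWAYS"        # prompt the human before every reply
    TERMINATE = "TERMINATE"  # prompt only at a stopping point
    NEVER = "NEVER"          # never prompt; run fully autonomously


def should_ask_human(
    mode: HumanInputMode,
    is_termination_msg: bool,
    auto_replies: int,
    max_auto_replies: int,
) -> bool:
    """Simplified model of when a proxy agent hands control back to a person."""
    if mode is HumanInputMode.ALWAYS:
        return True
    if mode is HumanInputMode.NEVER:
        return False
    # TERMINATE: ask when the other agent signals it is done, or when the
    # budget of consecutive automatic replies has been used up.
    return is_termination_msg or auto_replies >= max_auto_replies


print(should_ask_human(HumanInputMode.TERMINATE, False, auto_replies=3, max_auto_replies=3))  # True
```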

Async Event-Driven Architecture (v0.4)

AutoGen v0.4 rebuilt the agent runtime around an async, event-driven model based on the actor pattern. Agents are now actors that communicate by sending and receiving typed messages asynchronously. This is a significant improvement over the synchronous blocking model of v0.2, enabling better concurrency, more reliable error handling, and cleaner separation between agent logic and communication infrastructure.
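To illustrate the actor idea, here is a toy version built on asyncio queues. Each actor owns a mailbox, processes one message at a time, and dispatches on the message's type. This sketches the pattern only. AutoGen Core's actual runtime and agent base classes look different, and Task, Result, Worker, and Collector are hypothetical names.

```python
import asyncio
from dataclasses import dataclass
from typing import List, Union


@dataclass(frozen=True)
class Task:
    text: str


@dataclass(frozen=True)
class Result:
    text: str


Message = Union[Task, Result, None]  # None is used as a shutdown signal


class Actor:
    """An actor owns a private mailbox; others interact with it only by sending messages."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.mailbox: "asyncio.Queue[Message]" = asyncio.Queue()

    async def send(self, msg: Message) -> None:
        await self.mailbox.put(msg)


class Worker(Actor):
    def __init__(self, name: str, reply_to: Actor) -> None:
        super().__init__(name)
        self.reply_to = reply_to

    async def run(self) -> None:
        while True:
            msg = await self.mailbox.get()  # handle one message at a time
            if msg is None:                 # shutdown signal
                return
            if isinstance(msg, Task):       # dispatch on message type
                await self.reply_to.send(Result(f"{self.name} did {msg.text}"))


class Collector(Actor):
    def __init__(self, name: str) -> None:
        super().__init__(name)
        self.results: List[str] = []

    async def collect(self, expected: int) -> List[str]:
        while len(self.results) < expected:
            msg = await self.mailbox.get()
            if isinstance(msg, Result):
                self.results.append(msg.text)
        return self.results


async def main() -> List[str]:
    collector = Collector("collector")
    worker = Worker("worker", reply_to=collector)
    worker_loop = asyncio.create_task(worker.run())
    for step in ["plan", "code", "test"]:
        await worker.send(Task(step))  # hand off to the worker's mailbox
    results = await collector.collect(expected=3)
    await worker.send(None)            # ask the worker to stop
    await worker_loop
    return results


results = asyncio.run(main())
print(results)
```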

In practice, this means AutoGen v0.4 is considerably more production-worthy than earlier versions. Concurrent agent execution, timeout handling, and graceful failure are now first-class rather than bolted on. The cost is that code written for v0.2 (which represents the majority of tutorials and blog posts as of this writing) needs updates to work with v0.4 — the APIs changed substantially.
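Concurrency, timeouts, and graceful failure fall out naturally once agent calls are coroutines. The asyncio sketch below is illustrative and does not use AutoGen. agent and broken_agent are hypothetical placeholders for model round-trips. guarded turns a timeout or exception into an ordinary result, so one failing agent doesn't abort the others.

```python
import asyncio
from typing import Awaitable, List


async def agent(name: str, delay: float, reply: str) -> str:
    """Hypothetical agent call; the sleep stands in for an LLM or tool round-trip."""
    await asyncio.sleep(delay)
    return f"{name}: {reply}"


async def broken_agent() -> str:
    raise RuntimeError("model endpoint returned 500")


async def guarded(call: Awaitable[str], timeout: float) -> str:
    """Turn timeouts and exceptions into values so one bad agent can't sink the batch."""
    try:
        return await asyncio.wait_for(call, timeout)
    except asyncio.TimeoutError:
        return "timed out"
    except Exception as exc:
        return f"failed: {exc}"


async def fan_out(timeout: float) -> List[str]:
    calls = [
        agent("researcher", 0.01, "found sources"),
        agent("coder", 1.0, "wrote patch"),  # slower than the timeout
        broken_agent(),
    ]
    # Run all agents concurrently; wall time is roughly bounded by `timeout`.
    return list(await asyncio.gather(*(guarded(c, timeout) for c in calls)))


print(asyncio.run(fan_out(timeout=0.2)))
```

The batch yields one normal reply, one "timed out", and one "failed: ..." entry. It finishes in about the timeout rather than the slowest agent's latency.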

AutoGen Studio

AutoGen Studio is a local web UI for building, testing, and iterating on multi-agent workflows without writing Python. You define agents, configure their tools and capabilities, wire them together into teams, and run conversations — all via a visual interface. It's genuinely the most accessible entry point into multi-agent development I've encountered, and it's particularly valuable for non-engineers who want to explore what agentic AI can do without a development environment.

Studio also serves as a debugging tool for engineers. Watching the live conversation between agents — seeing what each agent says, what code it generates, what the execution result was — is far more informative than reading log files. For complex workflows with multiple agents, this observability is a significant advantage.

Docker Sandboxed Code Execution

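When AutoGen agents generate and execute code, that code can run inside a Docker container, isolated from the host system. In v0.2, Docker execution is the default. In v0.4, you explicitly choose an executor such as the Docker-based DockerCommandLineCodeExecutor. Only the working directory mounted into the container is shared, so generated code can't reach the rest of your files or credentials. Network access, however, follows Docker's defaults unless you restrict it. Many agent frameworks either run generated code directly on the host (a significant security risk) or leave sandboxing to you, so having it built in is a real differentiator.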

For production deployments where agents are generating and running arbitrary code, this built-in sandboxing is invaluable. The container is ephemeral, and outputs are passed back to the agent as structured messages. The tradeoff is that Docker must be running locally, which adds setup overhead in CI/CD environments and cloud deployments.
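If you want tighter isolation than a default container gives you, the relevant knobs are ordinary docker run flags. The helper below only builds the command line for a locked-down run. It is a hypothetical sketch, not how AutoGen's DockerCommandLineCodeExecutor works internally. The python:3.12-slim image and the resource limits are assumptions to adjust.

```python
import shlex
from pathlib import Path
from typing import List


def sandbox_command(
    workdir: Path,
    script: str,
    image: str = "python:3.12-slim",  # assumed image; pin whatever your team uses
    timeout_s: int = 60,
) -> List[str]:
    """Build (but do not run) a locked-down `docker run` invocation for untrusted code."""
    return [
        "docker", "run",
        "--rm",                                   # remove the container when it exits
        "--network", "none",                      # no network access at all
        "--read-only",                            # immutable root filesystem...
        "--tmpfs", "/tmp",                        # ...plus a throwaway scratch dir
        "--memory", "512m",                       # cap RAM
        "--cpus", "1",                            # cap CPU
        "--pids-limit", "128",                    # limit process count (fork bombs)
        "-v", f"{workdir.resolve()}:/workspace",  # share only the work directory
        "-w", "/workspace",
        image,
        "timeout", str(timeout_s),                # coreutils timeout inside the container
        "python", script,
    ]


cmd = sandbox_command(Path("agent_work"), "main.py")
print(shlex.join(cmd))
# To execute: subprocess.run(cmd, capture_output=True, text=True)
```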

Multi-Agent Topologies

Beyond the two-agent pair, AutoGen supports group chat configurations where multiple agents participate in a single conversation, each contributing its specialized perspective. In v0.2, a GroupChatManager orchestrates the conversation and selects which agent speaks next. Its selection strategies are configurable: round-robin, LLM-based selection, or custom functions. v0.4 expresses the same ideas through team classes such as RoundRobinGroupChat and SelectorGroupChat.
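The selection strategies can be modeled as functions that read the conversation history and name the next speaker. In this plain-Python sketch, a custom rule (qa_router) gets the first say. When it has no opinion, it returns None and a round-robin fallback picks instead. The agents and their replies are hypothetical, and AutoGen's real hooks differ between v0.2 and v0.4.

```python
from itertools import cycle
from typing import Callable, Dict, List, Optional

Message = Dict[str, str]  # {"source": speaker name, "content": text}
Selector = Callable[[List[Message]], Optional[str]]


def round_robin(agents: List[str]) -> Selector:
    """Fixed rotation through the agents, ignoring message content."""
    order = cycle(agents)
    return lambda history: next(order)


def qa_router(history: List[Message]) -> Optional[str]:
    """Custom rule: code always goes to the tester; failed tests go back to the coder."""
    last = history[-1]
    if last["source"] == "coder":
        return "tester"
    if last["source"] == "tester":
        return "coder" if "FAIL" in last["content"] else "critic"
    return None  # no opinion: defer to the fallback


def run_group_chat(
    respond: Callable[[str, List[Message]], str],
    selector: Selector,
    fallback: Selector,
    max_turns: int,
) -> List[str]:
    history: List[Message] = [{"source": "user", "content": "Write a CSV parser."}]
    for _ in range(max_turns):
        speaker = selector(history) or fallback(history)
        history.append({"source": speaker, "content": respond(speaker, history)})
    return [m["source"] for m in history]


def fake_respond(speaker: str, history: List[Message]) -> str:
    """Hypothetical agents: the tester fails the first submission and passes the second."""
    if speaker == "tester":
        earlier_tests = sum(m["source"] == "tester" for m in history)
        return "FAIL" if earlier_tests == 0 else "PASS"
    return f"{speaker} output"


agents = ["planner", "coder", "tester", "critic"]
print(run_group_chat(fake_respond, qa_router, round_robin(agents), max_turns=6))
```

The tester's first verdict is FAIL, so the chat loops coder, tester, coder, tester before reaching the critic. That path is fully determined by the rule rather than by an LLM's judgment.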

This topology handles scenarios like: a research agent drafts a plan, a critic agent challenges its assumptions, a code agent implements a component, and a testing agent validates it — all in a single flowing conversation. The model is expressive, though it can be challenging to control precisely. Unlike LangGraph's explicit graph-based routing, AutoGen's group chat selection is partly emergent, which can lead to unexpected conversation flows in complex setups.
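One way to claw back some control is to let the LLM propose the next speaker but check its pick against an explicit graph of allowed transitions. The sketch below assumes a hypothetical five-agent graph. When the model picks someone it shouldn't, the code falls back to the first allowed agent. AutoGen v0.2's GroupChat offered a comparable constraint through allowed speaker transitions; check your version's documentation for the exact parameters.

```python
from typing import Dict, List

# Hypothetical routing graph: who may speak after whom.
ALLOWED_NEXT: Dict[str, List[str]] = {
    "user": ["researcher"],
    "researcher": ["critic"],
    "critic": ["researcher", "coder"],
    "coder": ["tester"],
    "tester": ["coder", "critic"],
}


def check_graph(graph: Dict[str, List[str]]) -> None:
    """Fail fast if a transition points at an agent the graph doesn't define."""
    for src, targets in graph.items():
        for dst in targets:
            if dst not in graph:
                raise ValueError(f"{src} -> {dst}: unknown agent")


def constrained_pick(last_speaker: str, llm_choice: str, graph: Dict[str, List[str]]) -> str:
    """Accept the model's pick only if the graph allows it; otherwise take the first allowed agent."""
    allowed = graph[last_speaker]
    choice = llm_choice.strip().lower()  # tolerate sloppy model output like " Coder\n"
    return choice if choice in allowed else allowed[0]


check_graph(ALLOWED_NEXT)
print(constrained_pick("critic", " Coder\n", ALLOWED_NEXT))  # allowed: coder
print(constrained_pick("coder", "critic", ALLOWED_NEXT))     # not allowed: falls back to tester
```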

Pricing

AutoGen is free, open source software under the MIT license. There is no hosted platform, no paid tiers, and no execution limits. Your costs are entirely your LLM API costs (OpenAI, Anthropic, Azure OpenAI, etc.) plus your own infrastructure.

This is a double-edged sword: there's no managed platform to simplify deployment, but there's also no vendor lock-in and no usage-based billing surprises. For teams with existing cloud infrastructure and Python expertise, this is a net positive. For teams that want managed deployment without operational overhead, the lack of a hosted offering is a genuine gap — one that CrewAI's hosted platform fills for its framework.

The Maintenance Mode Problem

This deserves its own section because it materially affects any decision to build on AutoGen today. Microsoft has publicly stated that AutoGen is in maintenance mode and that the forward development path runs through the Microsoft Agent Framework. That framework combines AutoGen and Semantic Kernel and was announced in October 2025.

What does maintenance mode mean in practice? Security vulnerabilities will be patched. Critical bugs will be fixed. But new features, new agent patterns, new integrations, and new capabilities will not be added to AutoGen. If you build a production system on AutoGen today and need a capability that doesn't yet exist — better memory management, new tool types, improved streaming — you'll need to migrate to Microsoft Agent Framework to get it.

The Microsoft Agent Framework itself is still early-stage as of April 2026. Documentation is fragmented, the API surface is evolving, and the migration path from AutoGen is not yet fully smoothed. This is a genuinely uncertain situation, and the honest answer is that the ecosystem is in flux. For teams with risk tolerance and a stake in the Microsoft stack, betting on Agent Framework makes sense. For teams that want a stable, actively developed framework today, CrewAI or LangGraph are safer bets.

What We Don't Like

Maintenance mode is a real concern: For any new project, the fact that AutoGen's development frontier has moved to Microsoft Agent Framework is a genuine reason to reconsider. You're taking on future migration debt from day one. This doesn't make AutoGen bad software — it's still excellent — but it changes the calculus.

Documentation fragmentation: AutoGen documentation spans GitHub READMEs, Microsoft Research blog posts, the official docs site, and learn.microsoft.com pages for Agent Framework — and these sources don't always agree. The v0.2/v0.4 split makes this worse: a substantial fraction of tutorials and community answers on Stack Overflow apply to v0.2, not v0.4. New users regularly get stuck on version-specific incompatibilities.

Steep learning curve: Despite AutoGen Studio's accessibility, the full framework has a steep learning curve. The actor-based async architecture in v0.4 is conceptually different from the synchronous Python that most developers are familiar with. Understanding when to use GroupChat vs. nested agents vs. sequential workflows requires real framework experience, not just documentation reading.

Group chat unpredictability: The LLM-based group chat selection strategy — where the manager LLM decides which agent speaks next — can produce unexpected conversation flows. Debugging why a group chat went off-track requires reading the full conversation log, which can be hundreds of messages for complex tasks. Frameworks with explicit routing graphs give you much better observability and control.
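One practical mitigation is to bound group chats with explicit stop conditions. A runaway conversation then ends early, and the log you have to read stays short. AutoGen v0.4's AgentChat provides conditions such as MaxMessageTermination and TextMentionTermination that compose with the | operator. The plain-Python sketch below imitates that idea without the library, and its class and function names are hypothetical.

```python
from typing import Callable, List, Optional

Check = Callable[[List[str]], Optional[str]]


class Termination:
    """A stop condition that returns a reason string, or None to keep going."""

    def __init__(self, check: Check) -> None:
        self.check = check

    def __call__(self, messages: List[str]) -> Optional[str]:
        return self.check(messages)

    def __or__(self, other: "Termination") -> "Termination":
        # Stop as soon as either condition fires.
        return Termination(lambda msgs: self(msgs) or other(msgs))


def max_messages(n: int) -> Termination:
    return Termination(lambda msgs: f"hit {n}-message cap" if len(msgs) >= n else None)


def text_mention(token: str) -> Termination:
    return Termination(lambda msgs: f"saw {token!r}" if msgs and token in msgs[-1] else None)


stop = max_messages(20) | text_mention("TERMINATE")
print(stop(["draft", "review"]))                 # None: keep going
print(stop(["draft", "looks good. TERMINATE"]))  # saw 'TERMINATE'
print(stop(["msg"] * 20))                        # hit 20-message cap
```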

Our Verdict

AutoGen earns a 4.0/5 from us — a score that reflects genuine technical excellence tempered by an uncomfortable strategic reality. The conversational multi-agent paradigm, human-in-the-loop support, Docker sandboxing, and AutoGen Studio are all best-in-class features that no other framework matches in combination. If you need those specific capabilities, AutoGen is still the tool to reach for.

The maintenance mode status is the honest deduction. AutoGen isn't broken. The deduction is there because building production systems on software whose active development has publicly ended carries real technical debt. New adopters should seriously weigh the migration path to Microsoft Agent Framework as part of their architecture decision.

The bottom line: If you have an existing AutoGen deployment, keep running it with a versioned dependency and plan your migration timeline. If you're starting a new project today and value the Microsoft ecosystem, evaluate Microsoft Agent Framework directly rather than AutoGen. If you want a stable, actively developed multi-agent framework, CrewAI or LangGraph are the better starting points.

Pros & Cons

Pros

- Free and open-source from Microsoft

- Excellent for conversational agents

- Human-in-the-loop support

- Strong Azure integration

- Research-backed architecture

Cons

- Steeper learning curve than alternatives

- Documentation can be technical

- Smaller community than LangChain

- Requires solid Python skills


Verdict

AutoGen (Microsoft) earns a strong 4.3/5 in our testing. It is a solid choice for research teams and enterprises building conversational AI systems, offering a good balance of features and accessibility.

AutoGen is free and open source, so there is very little risk in trying it out. If you are evaluating AI frameworks, AutoGen (Microsoft) deserves serious consideration.

Frequently Asked Questions

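Is AutoGen free to use?

Yes. AutoGen is open-source software under the MIT license. Your only costs are LLM API usage and your own infrastructure.

Is AutoGen still being developed?

Only in maintenance mode. Security fixes and critical bug fixes continue, but new features go to Microsoft Agent Framework.

What is the difference between AutoGen v0.2 and v0.4?

v0.4 rebuilt the runtime around an async, event-driven actor model and split the framework into focused packages. The APIs changed substantially, so code and tutorials written for v0.2 need updates to run on v0.4.

Does AutoGen require Docker?

Only for sandboxed code execution. Agents that don't run generated code, or that use a local executor, work without Docker.

How does AutoGen compare to CrewAI?

AutoGen stands out for its conversational multi-agent paradigm, human-in-the-loop controls, Docker sandboxing, and AutoGen Studio. CrewAI is actively developed and offers a hosted platform. That makes CrewAI the safer choice if you want a stable framework or managed deployment.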

Sources & References

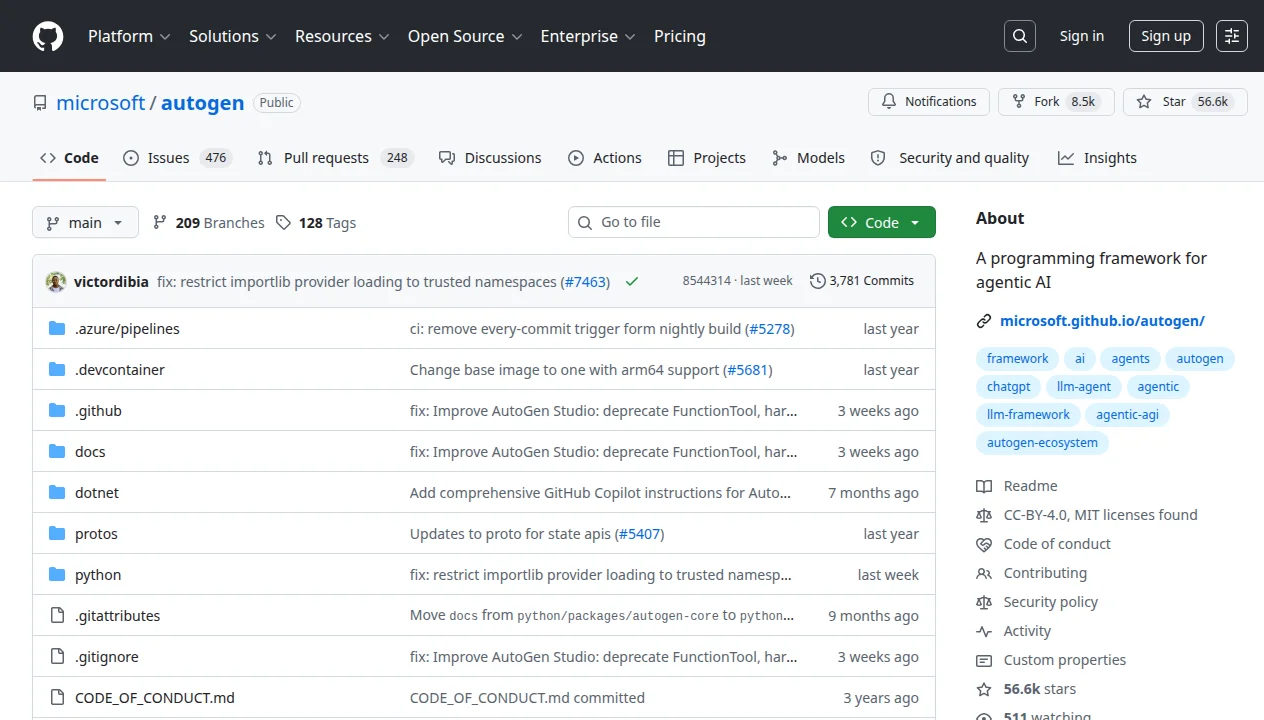

- Microsoft AutoGen GitHub Repository· Official open-source repository, issues, and migration guides

- Microsoft Research — AutoGen Project· Research background and official project status

- AICoolies — AutoGen Review· Independent community review covering strengths and weaknesses

- Microsoft Learn — Agent Framework Overview· Official documentation for the Microsoft Agent Framework successor

- MGX Dev — AutoGen Comprehensive Review· Detailed technical analysis of AutoGen's architecture and capabilities

Written by Marvin Smit

Marvin is a developer and the founder of ZeroToAIAgents. He tests AI coding agents daily across real-world projects and shares honest, hands-on reviews to help developers find the right tools.

Related AI Agents

CrewAI

Open-source framework for orchestrating role-playing autonomous AI agents working together as a crew.

LangGraph

LangChain's graph-based framework for building stateful, cyclic agent workflows with loops and persistence.

AgentGPT

Browser-based autonomous AI agent that breaks down goals into tasks and executes them independently.